Run multiple benchmarks to stress system performance and assess the hardware capabilities of your computer using this portable tool. #Memory benchmark #System benchmark #Performance benchmark #Benchmark #Performance #Cache

Science Mark is a feather-light, portable and powerful application that contains several benchmark tests to help you assess the hardware capabilities of your computer.

This kind of software comes in handy if you're thinking about upgrading parts of your computer, or for troubleshooting when a device isn't working properly.

Since installation isn't necessary, you can save the program files anywhere on the disk and simply click the executable to launch Science Mark. Alternatively, you can copy it to a removable storage device and run it on any PC without installing anything beforehand. It doesn't add new entries to the Windows registry.

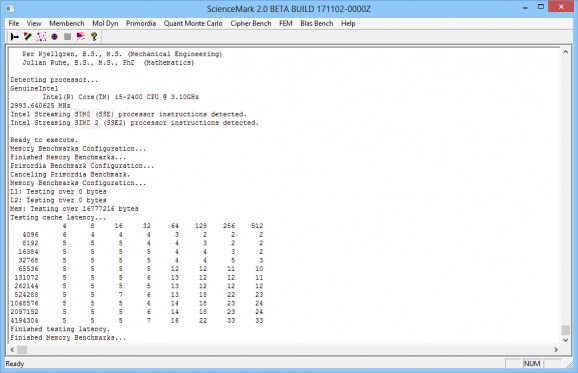

Science Mark sports a classic interface consisting of a standard window with a well-structured layout, where you can select the benchmarks to run.

You can run benchmarks for memory, molecular dynamics, Primordia (where you select the target atom and simulation characteristics), Quantum Monte Carlo, cipher, and FEM (Finite Element Method), as well as SGEMM (Single Precision General Matrix Multiply) and DGEMM (Double Precision General Matrix Multiply), which are configured through the BLAS controls.
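To give a rough idea of what a matrix-multiply benchmark measures, here is a minimal sketch. It is not Science Mark's code: it uses a naive triple loop rather than an optimized BLAS kernel, and the helper names (`matmul`, `gemm_flops`, `benchmark`) are hypothetical. It relies on the standard convention that multiplying an (n × k) matrix by a (k × m) matrix takes 2·n·k·m floating-point operations, and reports throughput in GFLOP/s. Python floats are 64-bit doubles, so this mirrors the DGEMM case only; an SGEMM run would store and compute with 32-bit floats.

```python
import time


def matmul(a, b):
    """Naive C = A x B on lists of lists of Python floats (64-bit doubles)."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    for i in range(n):
        ai, ci = a[i], c[i]
        for p in range(k):
            aip, bp = ai[p], b[p]
            for j in range(m):
                ci[j] += aip * bp[j]  # one multiply and one add per iteration
    return c


def gemm_flops(n, k, m):
    """An (n x k) by (k x m) multiply performs n*k*m multiplies and n*k*m adds."""
    return 2 * n * k * m


def benchmark(n=64, repeats=3):
    """Time square n x n multiplies; return (GFLOP/s of the best run, best seconds)."""
    a = [[float(i + j) for j in range(n)] for i in range(n)]
    b = [[float(i - j) for j in range(n)] for i in range(n)]
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        matmul(a, b)
        best = min(best, time.perf_counter() - start)
    return gemm_flops(n, n, n) / best / 1e9, best


if __name__ == "__main__":
    gflops, seconds = benchmark(n=64)
    print(f"{gflops:.3f} GFLOP/s (best of 3 runs: {seconds:.4f} s)")
```

Taking the best of several runs reduces noise from other processes. Tuned BLAS libraries use blocked, vectorized kernels and reach far higher throughput than this pure-Python loop, so only the method, not the numbers, carries over.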

Each type of benchmark comes with its own set of configuration parameters. For example, for the molecular dynamics test, you can either proceed with a default simulation or specify the number of cells, temperature, number of time steps, time step length, cube type, and positions.
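As a hedged illustration of how such parameters typically interact in a molecular dynamics setup, the sketch below builds starting atom positions on a cubic lattice and computes the total simulated time. Science Mark's own definitions are not given here, so it is an assumption that "number of cells" means unit cells per cube edge and that "cube type" selects a lattice such as simple cubic (SC), body-centered cubic (BCC), or face-centered cubic (FCC). The names `BASES`, `lattice_positions`, and `simulated_time` are hypothetical.

```python
import itertools

# Assumed meaning of "cube type": fractional coordinates of the atoms inside one
# cubic unit cell (standard crystallographic bases for SC, BCC and FCC lattices).
BASES = {
    "sc": [(0.0, 0.0, 0.0)],
    "bcc": [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)],
    "fcc": [(0.0, 0.0, 0.0), (0.5, 0.5, 0.0), (0.5, 0.0, 0.5), (0.0, 0.5, 0.5)],
}


def lattice_positions(cells, lattice_constant, cube_type="fcc"):
    """Place atoms on a cells x cells x cells block of cubic unit cells."""
    basis = BASES[cube_type]
    positions = []
    for ix, iy, iz in itertools.product(range(cells), repeat=3):
        for bx, by, bz in basis:
            positions.append(((ix + bx) * lattice_constant,
                              (iy + by) * lattice_constant,
                              (iz + bz) * lattice_constant))
    return positions


def simulated_time(time_steps, step_length):
    """Total simulated time is the number of steps times the step length."""
    return time_steps * step_length


if __name__ == "__main__":
    atoms = lattice_positions(cells=4, lattice_constant=1.0, cube_type="fcc")
    print(len(atoms), "atoms")  # 4 atoms per FCC cell x 4**3 cells = 256
    print(simulated_time(1000, 0.002), "time units")
```

The atom count grows with the cube of the cell count, which is why raising the number of cells makes each time step considerably more expensive.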

Unfortunately, the app offers no way to export benchmark results to a file or copy them to the Clipboard. This is understandable, though, given that Science Mark is a very old tool that hasn't been updated in a long time. Still, it's worth a try.

Science Mark 2.0 Build 171102-0000Z Beta

- runs on: Windows All

- file size: 280 KB

- filename: ScienceMark2_17NOV02_EXECUTABLE.zip

- main category: System